Improving automotive manufacturing throughput and profitability with AI & machine learning

Last updated on September 22nd, 2022

In a recent webinar with Microsoft, we were fortunate to hear from Brian Willson, VP of the Midwest Region for Microsoft, Matt Townsend, Managing Director at Riveron, and Thomas Bloor, VP of Global Sales at Acerta.

These automotive experts discussed why culture is key to digital transformations, what real-time analysis actually looks like on the plant floor, and how machine learning leverages the cloud to improve operations. Here are some of the big questions and important takeaways from that discussion.

How Is The Tech Industry Supporting Automation In Auto Manufacturing?

Some compelling statistics: 60% of manufacturers will save 10% of their costs through automation, and 85% of auto executives agree that the digital ecosystem will generate higher revenues than the hardware of the car itself. Manufacturers have every reason to take advantage of automation, but digital transformation doesn’t happen overnight. That level of automation requires a shift in mindset that impacts businesses from end to end.

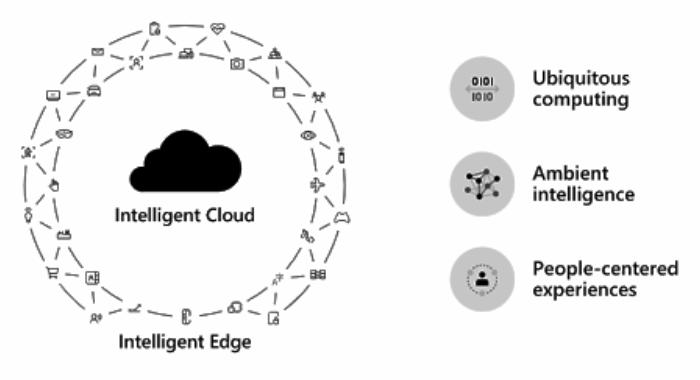

Over the next decade, three main tech trends will drive manufacturing ops:

- Ubiquitous computing

- Ambient intelligence

- People-centered experiences

You hear about the cloud all the time. Through infrastructure that uses both intelligent cloud and intelligent edge, computing will occur ubiquitously (at any time and on any device). If you run deep manufacturing operations with thousands of sensors and need low latency, you need computing power at the edge that connects with your capabilities in the cloud. It’s not just about infrastructure: application consistency, security consistency, and management consistency are all necessary to innovate.

Ambient intelligence and machine learning technologies can solve complex, broad problems in the auto industry, and these broad solutions can then be refined to address more specific use cases.

Regarding people-centered experiences, people always need to be at the center of everything that you do. So, when you interact with your tools and your applications, it’s crucial for your experience to follow you from device to device.

How Does Organizational Culture Support Digital Transformation?

Even a tech company like Microsoft faced resistance when it started integrating machine learning into its operations. Internal stakeholders who had built their reputations and credibility on their expertise felt threatened, as if one of the ways they were valued within the company was being replaced by a machine learning tool.

By integrating machine learning in parallel with existing processes, Microsoft showed stakeholders that it could automate much of their mundane work and place even more value on professional judgment and expertise. Machine learning was also able to find connections that people could not, regardless of their level of experience, which enabled more accurate forecasting.

That’s why some plants fail while other plants succeed using the exact same tech. The end result is driven by the plant’s culture itself. If you don’t deliberately use technology in your everyday activities, the culture will not adapt to it and you will not succeed.

What Does Real-Time Analytics Mean For A Manufacturing Engineer?

Real-time analytics is especially important when considering the aging workforce. Although experienced operators might know when a machine doesn’t sound right, new engineers don’t know what to listen for, or what dials to tweak.

Instead of relying on an experienced operator, you can get feedback directly on the line to make changes to processes. Beyond that, processes have scaled. A role that once covered one thousand square feet of manufacturing line might now cover five or six times that.

The sheer volume of what engineers have to monitor and comprehend is expanding over time, making it impossible to remember the way each and every machine sounds to build up that old-school expertise.

Real-time analytics moves decision-making to the operator level so data can actually make a difference in your job.

When you use condition-based monitoring, you take the data a process or machine is collecting and interpret those signals meaningfully. This improves your ROI, specifically by increasing visibility and enabling real-time, data-driven decision-making.

Your process and your machine controls can adapt to change and provide insight back to the operator about a dial that needs to be adjusted or a widget that should be modified.
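To make the idea concrete, here is a minimal sketch of condition-based monitoring for a single signal. It is an illustration only: the `ConditionMonitor` class, its `target`, `tolerance`, and `window` parameters, and the wording of the suggestion are all hypothetical and were not part of the webinar. It averages the most recent readings and, when that average moves outside a tolerance band, returns a suggestion for the operator.

```python
from collections import deque
from statistics import mean


class ConditionMonitor:
    """Hypothetical monitor that watches one signal and suggests an adjustment."""

    def __init__(self, target, tolerance, window=5):
        self.target = target          # desired value of the signal (assumed known)
        self.tolerance = tolerance    # allowed deviation of the rolling average
        self.readings = deque(maxlen=window)  # keeps only the latest readings

    def update(self, value):
        """Record a reading; return a suggestion string, or None if all is well."""
        self.readings.append(value)
        if len(self.readings) < self.readings.maxlen:
            return None  # not enough data yet to judge the trend
        offset = mean(self.readings) - self.target
        if abs(offset) <= self.tolerance:
            return None  # rolling average is within the tolerance band
        direction = "decrease" if offset > 0 else "increase"
        return f"{direction} setpoint by {abs(offset):.2f}"


# Example: a torque signal with a target of 50.0 and a tolerance of 1.0
monitor = ConditionMonitor(target=50.0, tolerance=1.0, window=3)
suggestions = [monitor.update(v) for v in (50.0, 50.0, 50.0, 52.0, 52.0, 52.0)]
```

Using a rolling average rather than a single reading is a deliberate choice here: it keeps one noisy measurement from triggering an adjustment. A real deployment would tune the window and tolerance per machine.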

On top of this, machine optimization analytics enable your machine to make changes on the fly, making your process that much better.

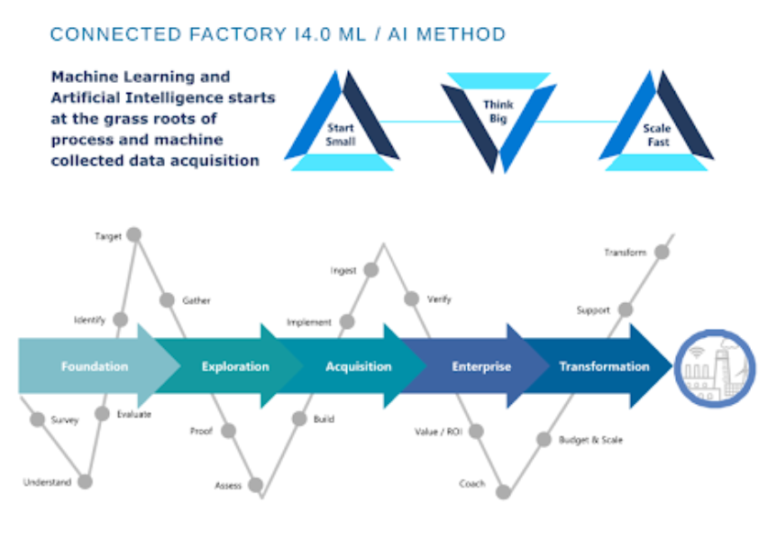

Let’s talk about the tactical approach: What do you need to use machine learning and where do you start?

Think about a small idea that could be scaled across the entire organization. Once you have something that you feel works, think about how fast you could scale it. As long as the data is being collected automatically, you can use process data, machine data, operator data, etc. to identify a problem and target a solution.

Maybe it’s a dimension. Maybe it’s a torque value. Maybe it’s a hole.

It doesn’t matter.

What matters is that you gather relevant data that can be interpreted and used to create a solution.

How Does Machine Learning Leverage The Cloud?

Machine learning is about augmenting decision making on the line so that engineers can make intelligent decisions and consistently crank out the same level of throughput. To implement machine learning, you need data.

Data has become a valuable commodity, and so manufacturers prioritize security. They don’t want their information to leave their own cloud instance or premises.

However, by leveraging Azure’s cloud infrastructure, a tenant space can be set up in the customer’s cloud where external machine learning infrastructure can be deployed. This alleviates security concerns as a manufacturer’s data can be used without ever leaving their cloud instance.

In Acerta’s case, for example, our LinePulse platform’s Advanced Anomaly Detection module uses machine learning to characterize what “normal” is based on the behavior of past signals. Since there are often lots of signals to process, machine learning models usually run in the cloud. This is especially useful if you’re looking for a specific failure mode.
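As a rough illustration of what “learning normal from past signals” can mean, the sketch below fits a simple statistical baseline (mean and standard deviation) to historical readings and flags new values that fall far outside it. This is a generic z-score check chosen for brevity, not a description of how LinePulse works; the function names and the threshold of 3 standard deviations are assumptions.

```python
from statistics import mean, stdev


def fit_baseline(history):
    """Summarize past 'normal' readings as (mean, sample standard deviation)."""
    return mean(history), stdev(history)


def is_anomalous(value, baseline, z_threshold=3.0):
    """Flag a reading that sits more than z_threshold deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        return value != mu  # history was perfectly constant
    return abs(value - mu) / sigma > z_threshold


# Example with made-up readings from a single signal
baseline = fit_baseline([10.0, 10.2, 9.8, 10.1, 9.9])
typical = is_anomalous(10.1, baseline)   # close to past behavior
unusual = is_anomalous(11.0, baseline)   # far outside past behavior
```

Production models typically consider many signals together and account for trends over time, which is part of why they tend to need more compute than a single threshold like this one.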

If you have line cycle times of thirty to sixty seconds, you need analysis in near real time. That’s why Acerta’s models can be embedded into a virtual machine on an edge device to provide insights for a single unit with low latency.

Intelligent Component Selection, another LinePulse module, is a good example of hybrid deployment where both the cloud and edge are used. Bulk data can be analyzed in the cloud for drift, while AI models are deployed on an edge device within your manufacturing process.

If a gearbox or an axle uses a variable-size component for controlling backlash or NVH (noise, vibration, and harshness), Intelligent Component Selection can analyze the data from all prior steps on the line. You can then identify how signals, dimensions, and drift are trending, and better analyze where errors occur and how quality can be improved, instead of just taking a very limited set of measurements from a particular unit.
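The selection step itself can be pictured with a deliberately simple sketch. Everything in it is hypothetical: the function name, the idea of deriving a gap from a housing depth and a stack of upstream part heights, and the shim sizes are illustrative assumptions rather than Acerta’s method. It picks the available shim thickness that leaves a clearance closest to the target.

```python
def select_shim(housing_depth_mm, part_heights_mm, target_clearance_mm,
                available_shims_mm):
    """Pick the shim thickness that leaves a clearance closest to the target.

    The gap to fill is estimated from upstream measurements (assumed here to be
    a housing depth minus the stacked heights of the parts inside it).
    """
    gap = housing_depth_mm - sum(part_heights_mm)
    needed = gap - target_clearance_mm
    # Choose the catalog size nearest to the thickness we actually need
    return min(available_shims_mm, key=lambda t: abs(t - needed))


# Example with made-up dimensions, all in millimetres
shim = select_shim(
    housing_depth_mm=42.37,
    part_heights_mm=[25.0, 15.0],
    target_clearance_mm=0.10,
    available_shims_mm=[2.00, 2.10, 2.20, 2.30, 2.40],
)
```

A learned model would replace the fixed gap formula with a prediction built from many upstream signals, but the final step is still a choice among discrete component sizes.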

For one customer, Intelligent Component Selection reduced scrap and rework by 43%. This helped the line run more smoothly and prevented micro-stoppages, increasing throughput on the line by over 25%.
