Detecting anomalies in automotive manufacturing

Last updated on March 15th, 2024

Industry 4.0 has arrived, and automotive manufacturers are collecting more data from the shop floor than ever before. Some have begun to use this data to their advantage by deriving insights to shed light on their production processes and solve complex problems.

One way automotive manufacturers have gained these insights is by using machine learning to detect anomalies in the data. Anomaly detection is the process of identifying data points, observations, or events that deviate significantly from the dataset’s overall pattern or expected behavior.

But what about statistical process control (SPC)? Can’t I control my process with SPC?

If that were true and SPC alone could control quality and solve all problems… then defects would no longer be a problem for any manufacturer.

While there is still a place for SPC on the shop floor, automotive manufacturers now have anomaly detection as a more adaptable, flexible option for keeping their processes running smoothly.

Where SPC relies on fixed, predefined control limits, anomaly detection is fluid. It monitors for changes from the process norm, which allows it to detect subtle changes and trends that SPC cannot easily detect. Anomaly detection can also monitor multiple signals together, which helps reveal relationships between different variables on the line. SPC, on the other hand, typically sticks to one signal at a time.
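To make the difference concrete, here is a minimal sketch in Python using only the standard library. It is an illustration under stated assumptions, not how any particular platform works: the two signals (`force` and `torque`), their values, and the helper names are hypothetical, and the Mahalanobis distance is used as a simple statistical stand-in for a multivariate anomaly detection model. The unit being checked sits inside the per-signal limits for both signals, yet the combination of high force and low torque is far from how the two signals normally move together.

```python
import math
import random
from statistics import mean, stdev


def control_limits(values, k=3.0):
    """Fixed per-signal limits: mean +/- k standard deviations."""
    m, s = mean(values), stdev(values)
    return m - k * s, m + k * s


def mahalanobis_2d(point, xs, ys):
    """Distance of `point` from the joint distribution of two signals."""
    mx, my = mean(xs), mean(ys)
    n = len(xs)
    sxx = sum((x - mx) ** 2 for x in xs) / (n - 1)
    syy = sum((y - my) ** 2 for y in ys) / (n - 1)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (n - 1)
    det = sxx * syy - sxy ** 2
    dx, dy = point[0] - mx, point[1] - my
    # Quadratic form using the inverse of the 2x2 covariance matrix
    d2 = (syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det
    return math.sqrt(d2)


# Synthetic history (assumption): force and torque normally rise together
rng = random.Random(0)
force = [rng.gauss(100.0, 5.0) for _ in range(500)]
torque = [0.5 * f + rng.gauss(0.0, 0.5) for f in force]

unit = (108.0, 46.0)  # hypothetical unit: high force paired with low torque

# One signal at a time: each value is checked against its own limits
force_lo, force_hi = control_limits(force)
torque_lo, torque_hi = control_limits(torque)
within_force = force_lo <= unit[0] <= force_hi
within_torque = torque_lo <= unit[1] <= torque_hi

# Both signals together: the unusual combination stands out
joint_distance = mahalanobis_2d(unit, force, torque)

print(within_force, within_torque, round(joint_distance, 1))
```

Both single-signal checks pass, while the joint distance comes out far larger than the value of about 3 that would be a common cutoff for "unusual". That is the kind of relationship between variables that one-signal-at-a-time monitoring can miss.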

Solving automotive manufacturing problems

Using anomaly detection can solve a wide variety of problems for automotive manufacturers depending on the need and the data available. Here are some examples:

- Improving quality metrics such as scrap rate, rework rate, and first time through

- Reducing downtime for machines and improving OEE

- Improving process design and optimization

- Reducing time needed for product testing

- Mitigating supply chain disruptions

- Optimizing energy use

Succeeding with anomaly detection in automotive manufacturing

Implementing an effective machine learning anomaly detection solution in an automotive manufacturing environment requires three key things:

1. A large automotive manufacturing dataset

Most automotive manufacturers use a system of sensors connected to the machines on their production lines. These sensors generate “signals”, which represent measurements from different aspects of the operations in the manufacturing line. The signal data from these sensors is usually aggregated in a central database. Other sources of data that impact manufacturing outcomes may also be gathered, such as end-of-line test data.

In general, the larger the automotive manufacturing dataset, the more accurately anomalies can be detected. Machine learning algorithms require large datasets to make predictions that can be validated. When there is a lot of data describing normal operation, anomalies stand out more prominently and are easier to recognize in the future.

The exact amount of data required will differ for every scenario. This article will give you a general idea about how much automotive manufacturing data you need to begin to detect anomalies in it with a machine learning solution.

2. Structured automotive manufacturing data

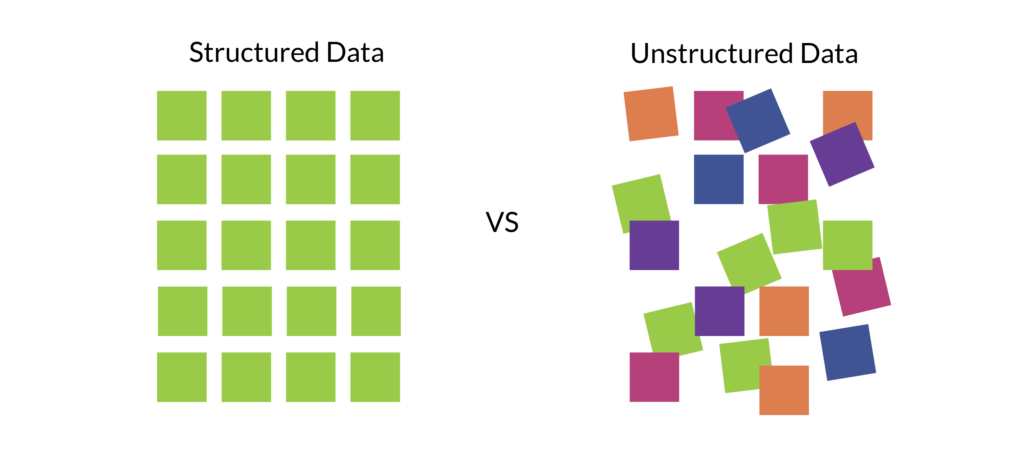

Automotive manufacturing data can be categorized into two types, depending on how it is organized: structured and unstructured.

Structured data is the easiest to work with, as it is clearly labeled and defined. Any anomalous data within a structured dataset is easily identified as an item or event that deviates from the norm. For example, if a scale on an automotive production line is set to signal any units with a mass exceeding 30 kilograms (the threshold), a unit weighing 33 kilograms would send a signal that it has exceeded the threshold, indicating an anomaly.
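The scale example above can be sketched in a few lines of Python. This is only an illustration: the record layout, the field names (`unit_id`, `mass_kg`), and the readings are hypothetical, and only the 30 kilogram threshold and the 33 kilogram unit come from the example.

```python
THRESHOLD_KG = 30.0  # threshold from the scale example


def exceeds_threshold(mass_kg, threshold_kg=THRESHOLD_KG):
    """Return True when a unit's mass is above the threshold."""
    return mass_kg > threshold_kg


# Hypothetical structured readings: every field is labeled and typed
readings = [
    {"unit_id": "A-001", "mass_kg": 28.4},
    {"unit_id": "A-002", "mass_kg": 29.9},
    {"unit_id": "A-003", "mass_kg": 33.0},
]

flagged = [r["unit_id"] for r in readings if exceeds_threshold(r["mass_kg"])]
print(flagged)
```

Because each reading carries a labeled `mass_kg` field, the check is a single comparison. The same check is not possible on unstructured data until the relevant measurement has been extracted and labeled.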

Unstructured data is much harder to work with because the dataset has no set parameters or definitions. An example of unstructured data is the pixels in a JPEG file. Another is a sequence of characters that the machine learning anomaly detection algorithm is unable to interpret. In both cases, the data is unusable until it is structured and labeled.

3. An anomaly detection algorithm or platform

There are a number of methods to detect anomalies in automotive manufacturing data. Machine learning models can be built and deployed to detect anomalies from stored data in a variety of ways by machine learning engineers and data scientists.

For a greater return on investment in anomaly detection, purchasing a real-time predictive quality platform is an excellent solution. A predictive quality platform contains algorithms that have been purpose-built for manufacturing. Some manufacturers may be tempted to build an analytics platform themselves, but it is often more efficient to buy a predictive analytics platform than to build one.

Automotive manufacturing anomaly detection case studies

Acerta has helped several leading precision automotive manufacturers solve manufacturing problems by deploying machine learning models to detect anomalies in their data. Here are some examples.

A major Tier-1 automotive supplier wanted to improve their end-of-line gearbox testing. They needed a more robust way to identify units that were likely to fail under warranty.

To solve this problem, Acerta’s machine learning engineers were provided with a 10 TB, decentralized, unlabeled dataset derived from a very limited number of gearbox test units. The small number of units made it very challenging to derive statistically significant results.

Acerta’s engineers used noise, vibration, and harshness (NVH) testing and anomaly detection to examine the data and identify early indicators of future gearbox failures. The outcome of the project was an 89% accuracy in predicting failure during the warranty period. This resulted in anticipated savings of €2M per plant from reducing their annual warranty costs.

An engine manufacturer needed to improve their vehicle diagnostics by predicting and identifying the causes of their engines failing end-of-line tests across multiple engine testing platforms.

This required a solution that could predict failures from data gathered from five different engine testing platforms, each of which had different test profiles.

The engine manufacturer required that two different machine learning approaches be implemented (classification and anomaly detection), with an accuracy of at least 80%. Acerta’s machine learning models identified 100% of the suspect signals in the engine test signal data and exceeded the client’s accuracy requirement, predicting engine failures with over 93% accuracy.

An automotive manufacturer wanted to leverage machine learning on their axle assembly line to identify anomalous data. They needed help locating the source of end-of-line failure in their axle assemblies to reduce the rate of product failure and related rework.

The client’s production lines involved more than 20 different operations that generated over 200 measurements per unit. That’s a lot of data. This made it very difficult to narrow down the source of failed axle assembly tests using SPC data and manual analysis alone.

Acerta implemented LinePulse—adapted and customized to fit the plant network infrastructure—and achieved a 65% reduction in failure and rework rates for the customer. This resulted in massive cost savings and a great improvement in operational efficiency.
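As a rough illustration of what narrowing down a failure source across many measurements can look like, here is a minimal Python sketch. It is not Acerta’s or LinePulse’s method. It is a generic first-pass screen that ranks each measurement by how far its average shifts between failed and passed units, relative to its normal spread. The measurement names, values, and the `rank_measurements` helper are all hypothetical.

```python
from statistics import mean, stdev


def rank_measurements(units, passed_key="passed"):
    """Rank measurements by standardized mean shift between failed and passed units."""
    names = [k for k in units[0] if k != passed_key]
    scores = {}
    for name in names:
        ok = [u[name] for u in units if u[passed_key]]
        bad = [u[name] for u in units if not u[passed_key]]
        spread = stdev(ok)  # normal variation among passed units
        scores[name] = abs(mean(bad) - mean(ok)) / spread if spread > 0 else 0.0
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical end-of-line records: two measurements per unit plus the test result
units = [
    {"press_force_n": 101.0, "bolt_torque_nm": 50.2, "passed": True},
    {"press_force_n": 99.5, "bolt_torque_nm": 49.8, "passed": True},
    {"press_force_n": 100.4, "bolt_torque_nm": 50.1, "passed": True},
    {"press_force_n": 100.1, "bolt_torque_nm": 49.9, "passed": True},
    {"press_force_n": 100.6, "bolt_torque_nm": 46.3, "passed": False},
    {"press_force_n": 99.8, "bolt_torque_nm": 46.9, "passed": False},
]

ranking = rank_measurements(units)
for name, score in ranking:
    print(f"{name}: {score:.1f}")
```

In this toy data the torque measurement ranks first, because failed units sit well outside the spread seen in passed units. With 200 measurements per unit, a screen like this only suggests where to look first; it does not account for interactions between measurements or establish a cause.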

Anomaly detection purpose-built for automotive manufacturers

Not to toot our own horn… but Acerta is on the cutting edge of applying machine learning and anomaly detection in automotive manufacturing. We combine machine learning and statistical process control (SPC) capabilities in our predictive quality solution, LinePulse. Using LinePulse, you can quickly and easily detect anomalies in your automotive manufacturing data and solve some of your biggest quality problems.

We’ve worked with Tier-1 and OEM automotive manufacturers such as Linamar, BorgWarner, Ford, Dana, and BMW to help them with some of their biggest manufacturing challenges.

Of course, there are other ways to use machine learning and anomaly detection that don’t use our software. You can develop and deploy machine learning models with your in-house data science team, or hire an external data science company to analyze your manufacturing data for you. These solutions are great if you have only one problem to solve, and if the problem is not urgent.

However, if you need an automotive manufacturing-specific platform that detects anomalies in real time, we haven’t seen another solution out there like LinePulse. If you find one, let us know.
